Introduction to DORA Metrics

DevOps Research and Assessment (DORA) metrics provide key insights into software delivery and operational performance. They have become an industry standard for evaluating and improving IT performance.

Origins of DORA Metrics: The metrics came out of research by experts including Nicole Forsgren, Jez Humble, and Gene Kim, who set out to identify the measures that matter most for software delivery performance. DORA metrics give the IT industry an empirical framework for gauging software delivery and operational performance, and that performance is linked to business outcomes: organizations that perform better on DORA metrics tend to show stronger organizational and financial performance.

The DORA Metrics: Explained

The four DORA metrics are Lead Time for Changes, Deployment Frequency, Change Failure Rate, and Mean Time to Recovery.

- Lead Time for Changes: This measures the time it takes for a code change to go from being committed to version control to running in production.

- Deployment Frequency: This quantifies how often an organization successfully deploys to production.

- Change Failure Rate: This measures the proportion of deployments that cause a failure in production.

- Mean Time to Recovery (MTTR): This metric captures how long it takes to restore service after a failure in production.
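To make the definitions concrete, here is a minimal Python sketch that computes all four metrics from a list of deployment records. The `Deployment` record and `dora_metrics` function are hypothetical, and the sketch makes simplifying assumptions: each deployment ships exactly one change, lead time is summarized with the median, and a failure is assumed to start when the faulty deployment lands, so recovery time is measured from `deploy_time` to `restored_time`.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean, median
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record: one entry per production deployment, assuming
    # each deployment ships exactly one change.
    commit_time: datetime                     # change committed to version control
    deploy_time: datetime                     # change running in production
    failed: bool = False                      # did it cause a production failure?
    restored_time: Optional[datetime] = None  # when service was restored, if it failed


def _hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def dora_metrics(deploys: list[Deployment], period_days: float) -> dict[str, Optional[float]]:
    """Compute the four DORA metrics for one reporting period (a sketch)."""
    if not deploys or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")

    # Lead Time for Changes: median commit-to-production time, in hours.
    lead_time = median(_hours(d.deploy_time - d.commit_time) for d in deploys)

    # Deployment Frequency: production deployments per day.
    frequency = len(deploys) / period_days

    # Change Failure Rate: share of deployments that caused a production failure.
    failures = [d for d in deploys if d.failed]
    failure_rate = len(failures) / len(deploys)

    # Mean Time to Recovery: mean deploy-to-restore time of restored failures,
    # assuming each failure starts when its deployment lands. None if there
    # are no restored failures in the period.
    recoveries = [
        _hours(d.restored_time - d.deploy_time)
        for d in failures
        if d.restored_time is not None
    ]
    mttr = mean(recoveries) if recoveries else None

    return {
        "lead_time_hours": lead_time,
        "deployment_frequency_per_day": frequency,
        "change_failure_rate": failure_rate,
        "mttr_hours": mttr,
    }
```

In practice the records would be assembled from your version control system, CI/CD pipeline, and incident tracker; joining those sources reliably is usually the hard part and is outside this sketch.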

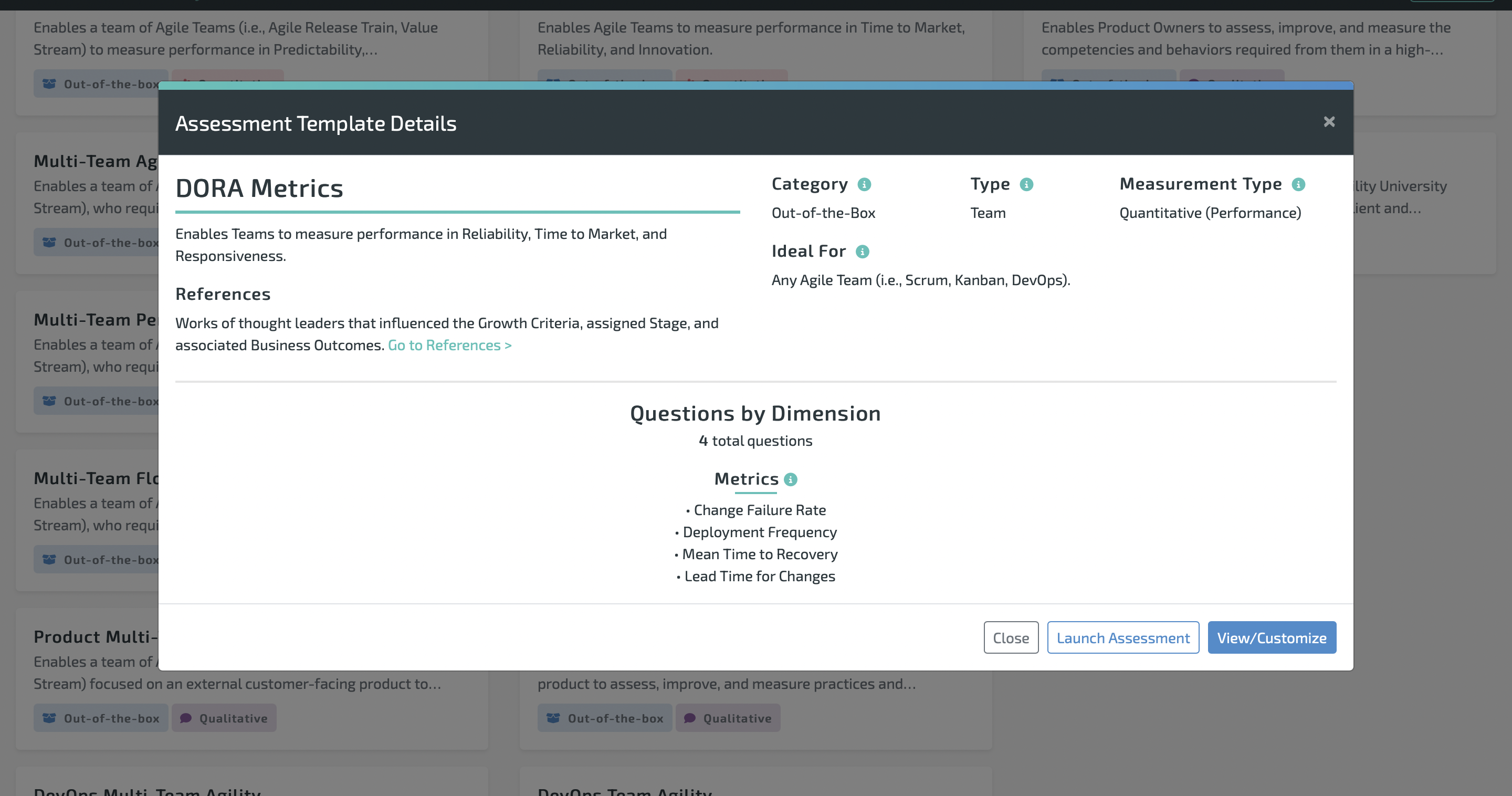

Lean Agile Intelligence provides an out-of-the-box, fully customizable DORA Metrics assessment that you can use to improve your team's and company's performance. Click here to take a sample DORA assessment or learn more about our DORA assessment template.

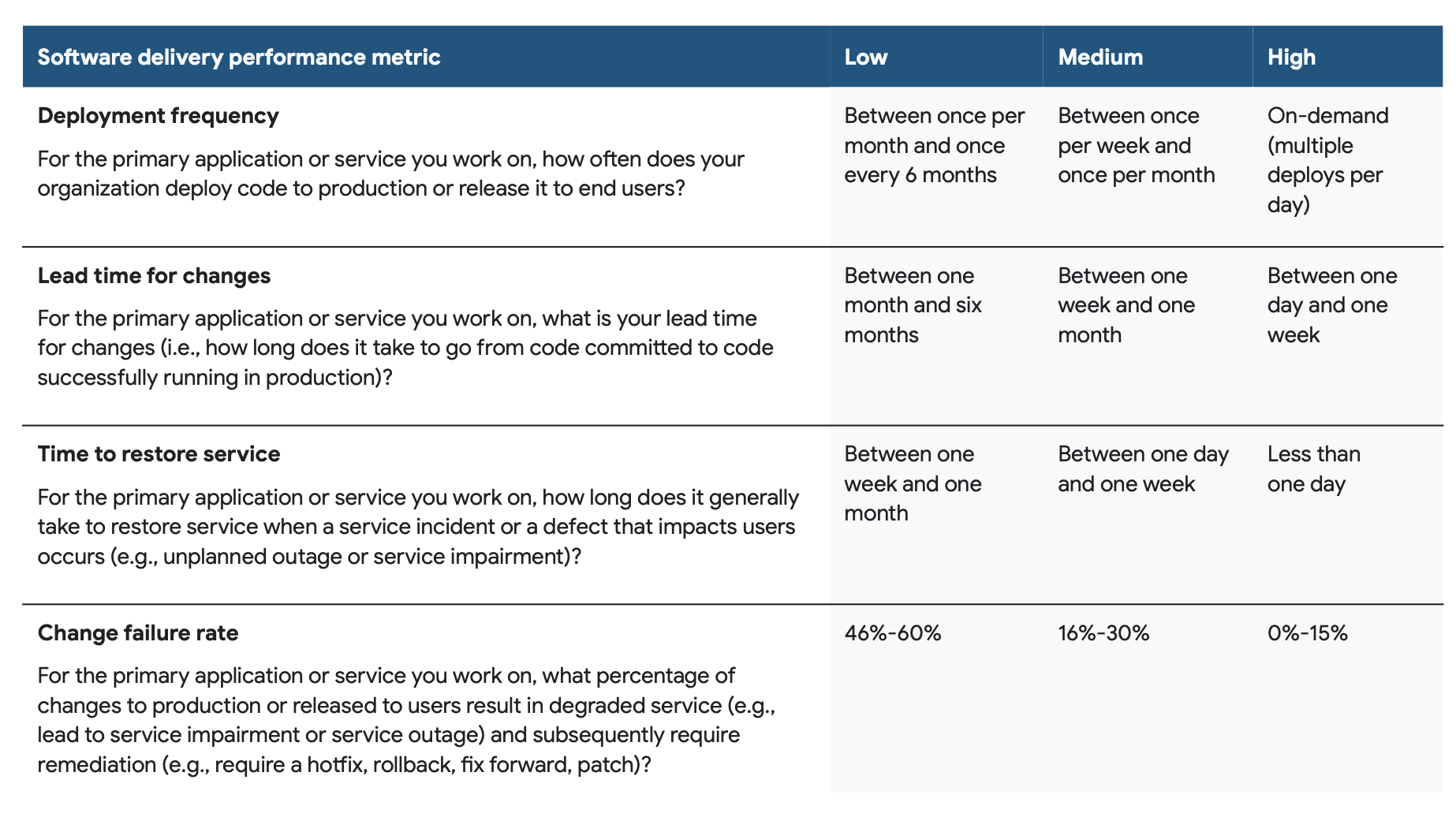

Interpreting DORA Metrics: The Scale

A performance scale based on DORA metrics distinguishes low, medium, and high performers.

- Low Performance: Slow lead times, infrequent deployments, high change failure rates, and long recovery times.

- Medium Performance: Moderate lead times, deployment frequency, failure rates, and recovery times.

- High Performance: Fast lead times, frequent deployments, low failure rates, and quick recovery.
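One way to apply such a scale is to rate each metric separately and then combine the ratings. The sketch below does that. The cut-off values in `LOWER_IS_BETTER` and `HIGHER_IS_BETTER` are illustrative assumptions, not figures taken from any DORA report, so substitute the ranges from whichever benchmark you use; the `Tier`, `classify`, and `overall_tier` names are likewise hypothetical.

```python
from enum import IntEnum


class Tier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# ILLUSTRATIVE cut-offs (assumptions, not published DORA ranges):
# metric name -> (bound for HIGH, bound for MEDIUM).
LOWER_IS_BETTER = {
    "lead_time_hours": (24 * 7, 24 * 30),  # within a week / within ~a month
    "change_failure_rate": (0.15, 0.30),   # share of deployments that fail
    "mttr_hours": (24, 24 * 7),            # within a day / within a week
}
HIGHER_IS_BETTER = {
    "deployment_frequency_per_day": (1.0, 1 / 30),  # daily / roughly monthly
}


def classify(metrics: dict[str, float]) -> dict[str, Tier]:
    """Rate each metric as LOW, MEDIUM, or HIGH against the cut-offs above."""
    tiers: dict[str, Tier] = {}
    for name, (high, medium) in LOWER_IS_BETTER.items():
        value = metrics[name]
        if value <= high:
            tiers[name] = Tier.HIGH
        elif value <= medium:
            tiers[name] = Tier.MEDIUM
        else:
            tiers[name] = Tier.LOW
    for name, (high, medium) in HIGHER_IS_BETTER.items():
        value = metrics[name]
        if value >= high:
            tiers[name] = Tier.HIGH
        elif value >= medium:
            tiers[name] = Tier.MEDIUM
        else:
            tiers[name] = Tier.LOW
    return tiers


def overall_tier(tiers: dict[str, Tier]) -> Tier:
    # Conservative "weakest link" rule: the overall rating is the worst metric's.
    return min(tiers.values())
```

The weakest-link rule is a deliberate choice: averaging the tiers instead would let a very fast deployment cadence mask a high change failure rate.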

According to the 2022 Accelerate State of DevOps Report, these are the current performance ranges:

DORA Metric Case Study and Tips to Improve

Capital One is a Fortune 500 company and diversified bank that offers various services to consumers, small businesses, and commercial clients. Capital One is one of the ten largest banks in the US and is known for its innovative approach to customized services and offerings. Capital One recently posted a DORA Metrics case study that can be found here.

After using a DORA Metrics assessment to guide its improvements, Capital One achieved a 20x increase in releases with no increase in production incidents. Some applications were even deployed more than 30 times per day!

Capital One used two main strategies to improve its results: Trunk Based Development and automating its change control processes.

Trunk Based Development

Trunk Based Development (TBD) is a software development approach where all developers work on a single branch, often called the 'trunk' or 'main'. Instead of creating long-lived feature branches, developers integrate their code into the trunk regularly, usually at least once daily. A great resource for learning more about trunk-based development can be found here.

Here's why Trunk Based Development can lead to faster time to market, better quality, and more frequent deployments:

- Faster Time to Market: TBD encourages developers to integrate their code frequently, leading to smaller, incremental changes that can be tested and deployed to production more swiftly. By avoiding long-lived branches, TBD eliminates the time-consuming process of merging these branches back into the main code base, which can often lead to complex merge conflicts. This ultimately helps to speed up the software delivery lifecycle, leading to a faster time to market.

- Better Quality: Frequent integrations mean issues are discovered and fixed early in development. Each change is smaller, making it easier to test thoroughly and reducing the risk of unexpected side effects. Moreover, as every commit is a potential release candidate, it encourages developers to always maintain a high-quality standard in their code.

- More Frequent Deployments: TBD aims to keep the trunk production-ready at all times, so you can deploy whenever you need to, which leads to more frequent deployments. Continuous integration, a cornerstone of TBD, helps keep the main code base in a deployable state. Combined with practices like automated testing and continuous delivery, this allows for safe, frequent deployments.

In summary, Trunk Based Development is about minimizing the feedback loop and the batch size of changes, allowing for quicker iterations, more frequent deployments, and an overall faster and more efficient software development process.
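A simple guardrail for Trunk Based Development is to flag branches that live longer than the integration interval you are aiming for. The sketch below assumes you already have, for each branch, the time it diverged from trunk (for example, the commit time of `git merge-base main <branch>`). The `long_lived_branches` function, the `main` trunk name, and the one-day default are assumptions chosen to match the "at least once daily" guideline above.

```python
from datetime import datetime, timedelta, timezone


def long_lived_branches(
    diverged_at: dict[str, datetime],
    now: datetime,
    max_age: timedelta = timedelta(days=1),
    trunk: str = "main",
) -> list[str]:
    """Return non-trunk branches that have lived longer than max_age, oldest first.

    diverged_at maps each branch name to the time it last split from trunk.
    """
    stale = [
        (created, name)
        for name, created in diverged_at.items()
        if name != trunk and now - created > max_age
    ]
    # Sorting the (time, name) pairs puts the oldest branch first.
    return [name for _, name in sorted(stale)]


if __name__ == "__main__":
    now = datetime(2024, 1, 10, 12, tzinfo=timezone.utc)
    branches = {
        "main": now - timedelta(days=30),
        "fix-login": now - timedelta(hours=3),
        "big-refactor": now - timedelta(days=5),
    }
    print(long_lived_branches(branches, now))  # ['big-refactor']
```

A team could run a check like this in CI or as a daily report, treating any flagged branch as a prompt to integrate rather than as a hard failure.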

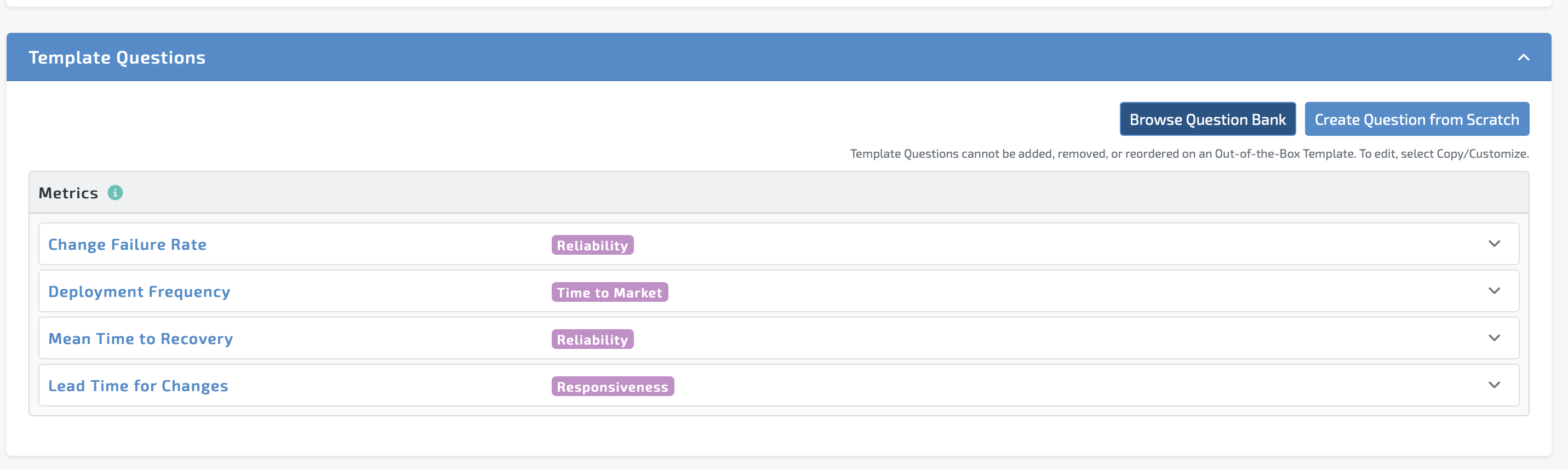

DORA Metrics Assessment Template

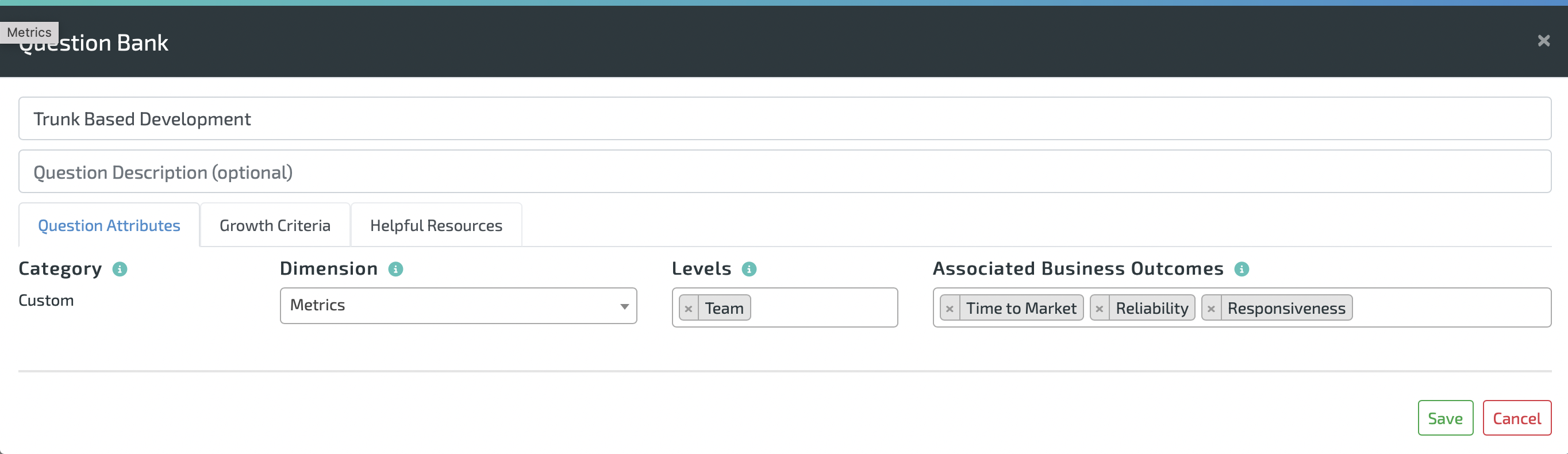

Lean Agile Intelligence (LAI) provides an out-of-the-box DORA metrics assessment with criteria based on industry performance. LAI also lets you add custom practices to any assessment, so you can create your own Trunk Based Development practice and add it to the LAI DORA Metrics assessment.

How to Add a Custom Practice to the DORA Metrics Assessment Template:

Go to Assessments -> click Assessment Templates.

Select DORA Metrics, then select "View/Customize."

Scroll down to the bottom of the template, then select "Create Question From Scratch."

Choose a dimension, level, and associated business outcomes. Below is an example.

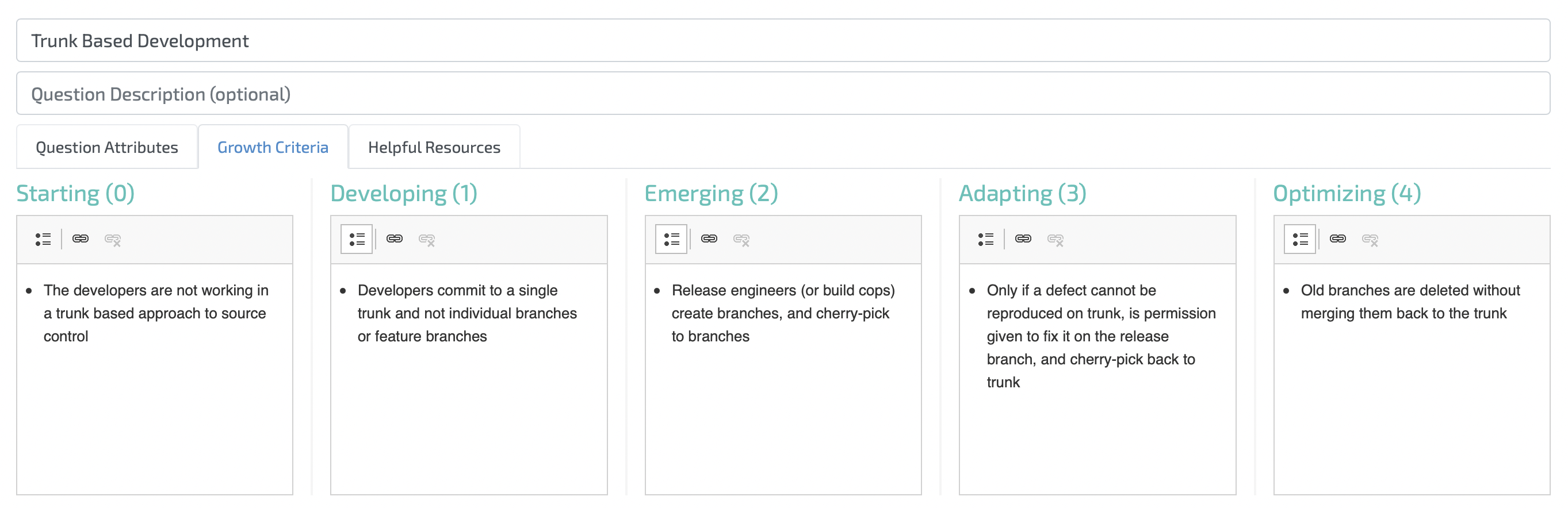

Then use the criteria below or create your own! Learn more about Trunk Based Development here.

Automating the Change Control Process

In software development, a "change control process" refers to the formal process used to ensure that any changes to a product or system are introduced in a controlled and coordinated manner. It reduces the chance of introducing unnecessary or poorly considered changes that could cause faults or system downtime.

The change control process is a key part of risk management in software development and operations. The traditional process includes the following steps:

- Change Request: Any proposed change must first be formally requested. This often involves filling out a change request form that describes the change, the reason for the change, and any potential impacts.

- Change Assessment: Once a change has been requested, it must be reviewed and assessed. This involves determining the impact of the change, the resources required, the potential benefits, and any possible risks.

- Approve or Reject: Based on the assessment, the change is approved or rejected. This decision is often made by a Change Advisory Board (CAB), which includes representatives from different parts of the organization.

- Implementation: If a change is approved, it's implemented. This should be done in accordance with any specified procedures to ensure that the change is introduced correctly and safely.

- Review: After the change has been implemented, it should be reviewed to ensure its success and to learn any lessons for future changes.
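The traditional steps above form a simple state machine. The following sketch, with hypothetical `Stage` and `ChangeRequest` names, enforces the allowed transitions (for example, a change cannot be implemented before it is approved) and records who moved each change to each stage and when, giving a built-in audit trail.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Stage(Enum):
    REQUESTED = "requested"
    ASSESSED = "assessed"
    APPROVED = "approved"
    REJECTED = "rejected"
    IMPLEMENTED = "implemented"
    REVIEWED = "reviewed"


# Which stages each stage may move to; REJECTED and REVIEWED are terminal.
ALLOWED: dict[Stage, set[Stage]] = {
    Stage.REQUESTED: {Stage.ASSESSED},
    Stage.ASSESSED: {Stage.APPROVED, Stage.REJECTED},
    Stage.APPROVED: {Stage.IMPLEMENTED},
    Stage.IMPLEMENTED: {Stage.REVIEWED},
    Stage.REJECTED: set(),
    Stage.REVIEWED: set(),
}


@dataclass
class ChangeRequest:
    title: str
    reason: str
    requested_by: str
    stage: Stage = Stage.REQUESTED
    # Audit trail: (stage entered, who moved it there, when).
    history: list[tuple[Stage, str, datetime]] = field(default_factory=list)

    def __post_init__(self) -> None:
        # The request itself is the first entry in the audit trail.
        self.history.append((self.stage, self.requested_by, datetime.now(timezone.utc)))

    def advance(self, to: Stage, actor: str) -> None:
        """Move to the next stage, refusing any transition the process forbids."""
        if to not in ALLOWED[self.stage]:
            raise ValueError(f"cannot move from {self.stage.value} to {to.value}")
        self.stage = to
        self.history.append((to, actor, datetime.now(timezone.utc)))
```

In a manual process each `advance` call corresponds to a person filling in a form or signing off; the automation ideas that follow replace some of those actors with tools while keeping the same transitions and trail.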

While valuable for maintaining stability and reducing risk, a traditional, non-automated change control process can introduce several challenges and inefficiencies in a modern software development environment. Common problems include slower delivery, an increased risk of human error, scalability issues, a lack of consistency, bottlenecks and delays, and difficulty tracking changes.

- Slower Delivery: Manual change control processes can be time-consuming. Each change requires manual review, approval, implementation, and verification. This can slow down the software delivery process significantly, hindering the team's ability to swiftly respond to changes in requirements, market conditions, or customer feedback.

- Increased Risk of Human Error: Humans are prone to making mistakes, especially when performing repetitive tasks. In a manual change control process, there's a higher chance of human error at every stage, from the assessment of changes to their implementation and review.

- Scalability Issues: As the number of changes and the size of the system grow, managing changes manually can become increasingly challenging. Maintaining a consistent overview of all ongoing changes and their impact on the system is harder.

- Lack of Consistency: People might have different standards or methods when managing changes manually. This can lead to inconsistencies, where similar changes are handled differently.

- Bottlenecks and Delays: Waiting for manual approvals and manual implementation can lead to significant delays. If the person responsible for approving or implementing changes is unavailable, the whole process can come to a standstill.

- Difficulty in Tracking Changes: In a manual change control process, tracking every change, who made it, and when it was made, can be difficult. This lack of traceability can pose problems for troubleshooting and compliance.

Here are some examples of how automation can be integrated into each step of the change control process:

- Change Request: While the origin of a change request might be a human identifying a need for a change, automation can still play a role here. For instance, bug-tracking tools can automatically create a change request when a certain issue is reported. Similarly, user feedback systems could be set up to generate change requests from customer suggestions automatically.

- Change Assessment: Automation can greatly speed up assessment. Automated testing tools can be used to evaluate the impact of a change on the rest of the system. For example, static code analysis tools like SonarQube can automatically assess the quality of the proposed change.

- Approve or Reject: In certain cases, if the change passes all automated checks and tests, automated rules can be set up to approve the change. For instance, if a change leads to all tests passing and does not decrease code coverage or other quality metrics, it could be automatically approved for deployment.

- Implementation: This is where automation shines through Continuous Integration and Continuous Deployment (CI/CD). When a change is approved, CI/CD pipelines can automatically build, test, and deploy the changes to various environments, from development to production.

- Review: After deployment, automated monitoring and logging tools can be used to evaluate the impact of changes. These tools provide real-time data about system performance and usage, allowing teams to review the effect of changes automatically. For example, tools like Splunk or Datadog can be set up to monitor system metrics and generate automatic reports.
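The automated approval rule described above can be expressed as a small gate in the pipeline. In this sketch the `CheckResults` fields and the `auto_decision` function are hypothetical; they assume your CI system can report test counts, line coverage before and after the change, and the pass/fail outcome of a static-analysis quality gate (such as one configured in SonarQube). Changes that fail any check are routed to manual review rather than rejected outright, so the human approval path stays available for cases the rules cannot decide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CheckResults:
    # Hypothetical summary a CI pipeline might report for one proposed change.
    tests_passed: int
    tests_failed: int
    coverage_before: float      # trunk line coverage, e.g. 0.82 for 82%
    coverage_after: float       # line coverage with the change applied
    quality_gate_passed: bool   # outcome of a static-analysis quality gate


def auto_decision(results: CheckResults, coverage_tolerance: float = 0.0) -> tuple[str, list[str]]:
    """Return ("auto-approve", []) or ("manual-review", reasons)."""
    reasons: list[str] = []
    if results.tests_passed == 0:
        reasons.append("no tests ran")
    if results.tests_failed > 0:
        reasons.append(f"{results.tests_failed} test(s) failed")
    if results.coverage_after < results.coverage_before - coverage_tolerance:
        reasons.append(
            f"coverage fell from {results.coverage_before:.1%} to {results.coverage_after:.1%}"
        )
    if not results.quality_gate_passed:
        reasons.append("static-analysis quality gate failed")
    # Anything the rules cannot clear goes back to the human approval path.
    return ("manual-review", reasons) if reasons else ("auto-approve", [])
```

The `coverage_tolerance` parameter is an assumed knob for teams that want to ignore tiny coverage fluctuations; leaving it at zero matches the stricter "does not decrease code coverage" rule.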

Conclusion

It's clear that DORA metrics are a valuable measurement of software delivery and operational performance and serve as a strategic tool for organizations striving to excel in the digital landscape. These metrics - deployment frequency, lead time for changes, change failure rate, and time to restore service - offer insight into how effectively an organization implements DevOps practices.

Understanding and improving these key metrics can provide a real competitive advantage. By highlighting the distinctions among low, medium, and high performers in DORA metrics, we've provided a clear benchmark for organizations to evaluate their current position and establish their aspirations.

The real-world case study of Capital One demonstrates how effective use of DORA metrics can lead to tangible improvements in software delivery and operational efficiency. It exemplifies the power of data-driven decision-making and continual learning in software development and operations.

Trunk-based development and automated change control sit at the crux of effective DevOps practice, and automation is the common thread. Automating change control can increase the speed and reliability of changes while maintaining control and minimizing risk, which is the purpose of change control in DevOps.

However, it's important to remember that the journey to high performance in DORA metrics is continuous. It involves an ongoing commitment to learning, adaptation, and improvement. With the right understanding, tools, and practices in place, any organization can use DORA metrics to drive excellence in its DevOps processes.

Get started with the Lean Agile Intelligence DORA Metrics Assessment today!

Frequently Asked Questions (FAQs)

What are the four DORA metrics? - The four DORA metrics are Deployment Frequency (DF), Lead Time for Changes (LT), Time to Restore Service (TRS), and Change Failure Rate (CFR).

Why are DORA metrics important in DevOps? - DORA metrics are vital as they provide quantifiable software delivery and operational performance measures. These metrics enable organizations to track their progress and continuously improve their DevOps processes.

What distinguishes high performers from low performers in terms of DORA metrics? - High performers deploy more frequently, have shorter lead times, quicker service restoration, and lower change failure rates than low performers.

How can organizations improve their DORA metrics? - Organizations can improve their DORA metrics by adopting best practices such as improving code quality, expanding automation across the delivery pipeline, using trunk-based development, automating change control processes, and moving to the cloud.

What is the role of automation in improving DORA metrics? - Automation is critical in improving DORA metrics by increasing the speed, efficiency, and reliability of software delivery processes, reducing the risk of human error, and providing greater consistency and traceability.