There’s no shortage of AI content right now.

Every week, there’s a new list of “must-use tools,” a new framework, a new take on how AI is going to transform everything.

But step back and ask a simple question, “What actually works inside an organization?”, and the answers get a lot less clear.

Most of what’s out there is one of three things:

- too high-level to be actionable

- too tactical to scale

- or too disconnected from real business outcomes

So we put together a list of the AI resources we found most valuable while building three AI assessments focused on how organizations and teams actually use AI in practice:

- AI Readiness

- AI Enablement & Productivity

- Delivery AI Enablement

👉 Explore the AI Assessments Beta Program

To build those assessments, we had to go beyond surface-level insights.

We needed to understand:

- What does effective AI usage actually look like in practice?

- Which behaviors and practices are repeatable across teams?

- What moves organizations from experimentation to consistent delivery?

- Where does AI actually improve productivity, not just perception?

This isn’t a list of what’s trending. It’s a collection of resources that hold up under real-world use and support meaningful AI adoption at scale.

How We Filtered These Resources

Not all AI resources are created equal. To make this useful, we applied a simple filter:

Would this actually help a team or organization use AI more effectively?

Here’s what we looked for:

- Practical, Not Just Theoretical: We prioritized resources that show how AI is used in actual workflows—whether that’s writing better prompts, improving product discovery, or integrating AI into delivery processes.

- Scales Beyond the Individual: There’s a lot of content focused on individual productivity, but organizations don’t scale on individual hacks. We looked for resources that support shared practices, consistent usage, and repeatable patterns across teams.

- Backed by Credible Sources: We prioritized resources from organizations that are actively building, researching, or implementing AI at scale, not just commenting on it.

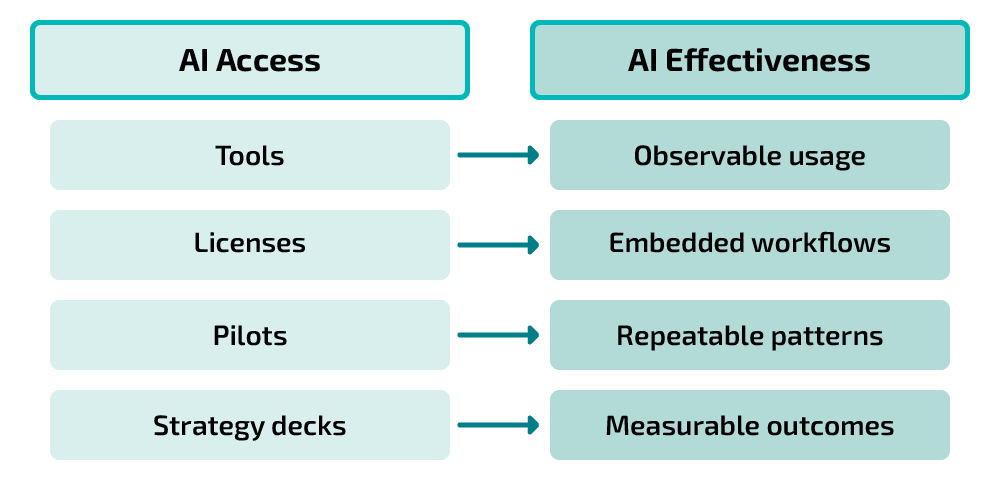

Taken together, these filters shift the focus from AI exploration to AI effectiveness, because successful AI adoption isn’t about access to tools. It’s about how those tools are used, embedded, and sustained across an organization.

Curated AI Resources by Capability

What became clear during our research is that AI success isn’t driven by a single tool or tactic.

It’s built through a set of connected capabilities. The resources below are grouped by those capabilities: the same areas we had to understand to design assessments that reflect how AI is actually used in organizations.

AI Strategy & Use Case Discovery

AI initiatives rarely fail because of technology. They fail because teams start with the wrong problems. These resources help identify high-value use cases and align AI efforts to real business outcomes.

- Identifying and Scaling AI Use Cases — OpenAI

  - A practical guide to finding high-impact AI opportunities and scaling them across the organization, with a focus on real business value.

- AI Business Use Cases — IBM

  - A structured overview of where AI delivers value across industries, useful for grounding strategy in real-world applications.

- AI Business Use Cases & Examples — Product School

  - A curated set of emerging use cases with practical examples across product, operations, and customer experience.

- How to Identify AI Use Cases That Align with Business Goals — Vation Ventures

  - Breaks down how to connect AI initiatives to strategic priorities and avoid disconnected experimentation.

- Evaluate and Prioritize an AI Use Case — Microsoft

  - A framework for assessing feasibility and impact to prioritize the right AI initiatives.

- The 4 Steps to Building an Effective AI Strategy — Stanford

  - A concise approach to turning AI ambition into an actionable, outcome-driven strategy.

AI Understanding (Demystifying AI)

Misunderstanding AI is one of the biggest blockers to adoption. These resources build a clear, practical understanding of how AI works so teams can use it effectively and confidently.

- What Is ChatGPT Doing… and Why Does It Work? — Stephen Wolfram

  - A deeper conceptual explanation of how AI models work, helping build a solid mental model beyond surface usage.

- Generative AI for Everyone — DeepLearning.AI

  - A beginner-friendly course covering core concepts and real-world applications without requiring a technical background.

- Generative AI for Beginners — Microsoft

  - A structured series introducing foundational AI concepts with practical examples.

- ChatGPT for Any Role — OpenAI Academy

  - Shows how AI can be applied across roles, helping teams identify practical use cases.

- Generative AI in a Nutshell — YouTube

  - A quick, high-level overview to build intuition around how generative AI works.

AI Prompting & Effective Usage

The same tool can produce very different outcomes depending on how it’s used. These resources focus on making AI interaction intentional, consistent, and repeatable.

- Prompting — OpenAI Academy

  - A foundational guide to prompting with practical examples for day-to-day use.

- Prompt Engineering White Paper — Google

  - A comprehensive breakdown of prompt design principles and patterns.

- Prompt Engineering Guide — PromptingGuide.ai

  - A deep library of prompting techniques for both beginners and advanced users.

- Prompt Engineering Best Practices — DigitalOcean

  - Practical tips for writing consistent, high-quality prompts.

- Prompt Design Strategies — Google Cloud

  - A structured overview of prompting approaches and optimization techniques.

AI Output Evaluation & Trust

AI is only valuable if its outputs can be trusted. These resources help teams evaluate quality, reduce risk, and build confidence in AI-assisted work.

- Evaluation Best Practices — OpenAI

  - Covers how to evaluate AI outputs using structured methods, metrics, and test cases.

- Test and Evaluation of AI Models — U.S. Department of Defense

  - A rigorous framework for testing AI systems in high-stakes environments.

- AI Model Testing Explained — Testomat

  - Breaks down testing methods, challenges, and best practices for AI systems.

- Trustworthy AI — TechTarget

  - Explains principles for building reliable and trustworthy AI systems.

- Using Generative AI Responsibly — YouTube

  - A practical overview of risks, safeguards, and responsible usage patterns.

AI Governance, Ethics & Security

Governance isn’t a layer to add later; it’s part of scaling AI responsibly. These resources support safe, compliant, and sustainable AI adoption.

- What is AI Governance? — IBM

  - Defines governance frameworks and how organizations ensure responsible AI usage.

- Govern AI — Microsoft

  - Guidance for implementing governance across the AI lifecycle.

- Responsible AI Framework — Harvard

  - Outlines key principles for ethical and accountable AI systems.

- AI Ethics Recommendation — UNESCO

  - A global standard covering fairness, transparency, and oversight.

- 5 GenAI Security Mistakes to Avoid — Google Cloud

  - Highlights common generative AI security risks and how to avoid them.

- AI Data Security Guide — BigID

  - Focuses on securing AI data pipelines and managing risk.

AI in Product & Delivery (Product + Engineering)

This is where AI becomes part of real work. These resources show how AI integrates into product discovery, development, testing, and delivery workflows.

- Product in the AI Era — SVPG

  - Explains how product management is evolving in an AI-first world, with a focus on outcomes over features.

- Your Guide to AI Product Discovery — Product School

  - Shows how AI can accelerate research, ideation, and validation in product discovery.

- AI Product Management Playbook — Pendo

  - A practical guide to embedding AI into product workflows from discovery through delivery.

- How AI Code Generation Works — GitHub

  - Breaks down how AI coding assistants actually work, helping teams use them more effectively.

- ChatGPT for Engineering Teams — OpenAI

  - Real-world use cases showing how engineering teams apply AI across development workflows.

- AI Code Guide — Automata

  - An open-source guide for integrating AI into software development practices and workflows.

AI Impact Measurement & ROI

Adoption without measurement leads to unclear value. These resources help teams assess whether AI is improving productivity, outcomes, and efficiency.

- AI Measurement Framework — LinearB

  - A structured approach to measuring AI performance, adoption, and engineering impact.

- Measuring AI Adoption & ROI — Wrench AI

  - Explains how organizations can track AI adoption and tie it back to business value.

- AI ROI Metrics That Matter — Authority AI

  - Breaks down key metrics for evaluating whether AI is actually delivering results.

- ROI of AI — DataCamp

  - Covers drivers, KPIs, and common challenges in measuring AI impact.

- AI Product Analytics — Moesif

  - Focuses on using AI-driven insights to improve product decisions and measurable outcomes.

AI Workflow Integration & Automation

AI creates value when it’s embedded into how work happens. These resources focus on integrating AI into workflows and enabling consistent, scalable usage across teams.

- AI in the Workplace — McKinsey

  - Explores how organizations move from experimentation to scaled AI adoption across teams.

- AI Integration into Workflows — Zapier

  - A practical guide to embedding AI into existing tools and processes.

- AI Workflow Automation — Atlassian

  - Explains how AI enhances collaboration and streamlines team workflows.

- How to Choose Tasks to Automate with AI — ProductTalk

  - Helps teams identify high-value automation opportunities with real examples.

- Task Automation Fundamentals — IBM

  - Covers the core concepts behind automation and how it drives operational efficiency.

- How to Bring AI into Your Workflows — MS Advocate

  - A step-by-step approach to integrating AI into day-to-day work without heavy complexity.

How These Capabilities Connect

Individually, each of these areas matters. But the real value comes from how they work together.

AI isn’t a single capability. It’s a system.

- Strategy defines where to focus

- Understanding enables better usage

- Prompting drives quality outputs

- Evaluation builds trust

- Governance ensures responsible use

- Product and engineering integrate AI into real work

- Measurement connects everything to outcomes

- Workflow integration makes it sustainable

When one of these is missing, things start to break down.

You might have great tools but no clear direction. Strong experimentation but no consistency. Early success but no way to scale.

👉 This is why many organizations feel stuck.

Not because they lack AI access, but because the capabilities needed to use it effectively aren’t fully in place.

Where to Start: Turning Insight Into Action

For leaders looking to move forward, the goal isn’t to do everything at once. It’s to create clarity and focus.

A simple place to start is our upcoming free webinar:

Build AI Capabilities with Intent, Focus, and Speed

🗓 May 7th at 12 PM ET

We’ll walk through how leading organizations are turning AI from experimentation into real, measurable productivity.

If you can’t make the live session, or you’re ready to go deeper, we’ve also been working on something to help with exactly this challenge.

Our AI Assessments Beta Program is designed to help organizations understand:

- where they stand today

- where gaps exist

- and where to focus next

Ultimately, success with AI isn’t about knowing more. It’s about using it effectively to drive meaningful outcomes.

👉 Explore the AI Assessments Beta Program

What’s Next

This is just the starting point.

In upcoming posts, we’ll go deeper into what it actually takes to move from AI exploration to real effectiveness, including practical approaches to prompting, identifying and prioritizing high-value use cases, and measuring AI impact in a way that connects to outcomes.

We’re also curating more focused resource collections, including AI resources for engineers and AI resources for product managers, built around how AI shows up in real workflows.

To get those as they’re released, subscribe to our newsletter.