The productivity gains from AI are becoming undeniable. Early adopters are completing tasks faster, generating more output, and finding new ways to accelerate their work, regardless of where they fall within an AI maturity model. As organizations continue to invest in AI, the real question is not whether AI is being adopted or which AI maturity model you are using.

It is this:

What type of impact is AI actually having?

How effective is your AI adoption?

In response, AI maturity models, AI readiness assessments, and broader AI assessments have become popular ways to assess progress, create alignment, and guide next steps in AI enablement efforts.

There are many models out there, a majority of which are used to promote AI enablement services. In reviewing them, a common shortcoming stands out. They do not clearly show how effective AI adoption is at driving real, measurable impact. Isn’t that what we care about? I know executives do!

A strong AI maturity model addresses this directly by making that effectiveness visible.

At its core, a good AI maturity model should:

- Reflect how AI adoption is showing up in real work

- Be grounded in observable evidence

- Embed meaningful outcomes into the model

- Drive clear, actionable next steps

When these elements are in place, an AI maturity model becomes more than an assessment. It becomes a tool for understanding and improving the effectiveness of AI enablement.

Does Your AI Maturity Model Show Whether AI Is Better Enabling How Work Gets Done?

The most effective AI maturity models and any meaningful AI assessment are grounded in execution, not abstraction. This is what separates surface-level AI maturity from real, operational impact.

For example, a strong model looks at how AI is being used to better enable:

- Coding and development tasks

- Documentation creation and maintenance

- Automation of routine tasks and workflows

This level of specificity matters.

AI does not create value in strategy documents or readiness plans. It creates value by better enabling tasks and increasing team productivity.

An AI maturity model that captures how AI is being applied to better enable real work makes it possible to move beyond general AI readiness and understand how effectively AI is being used across workflows. It shifts the conversation from “Are we adopting AI?” to “Where and how is AI actually improving the way we work?”

Join the Beta Program and Get Access to AI Assessments

Is AI Adoption Observable and Evidence-Based?

One of the biggest challenges with many AI maturity models and AI assessments is that they rely too heavily on perception.

Teams are often asked to evaluate themselves using degree-based language like:

- “Sometimes”

- “Often”

- “Rarely”

The issue is that these words are open to interpretation. What “sometimes” means to one team can be very different from what it means to another. As a result, scores become inconsistent, difficult to compare, and often misleading.

Instead of creating clarity, these models create debate.

Teams end up spending more time aligning on what “sometimes” means than addressing the real question:

Are we actually doing this or not? And if not, how do we get better at it?

A strong AI maturity model removes this ambiguity by grounding maturity in what can be observed and verified.

It looks for tangible signals such as:

- AI disclosures in work artifacts

- AI references in work items, such as tickets and tasks

- Shared prompts, patterns, or reusable approaches

- AI references in deliverables and outputs

These signals provide clear evidence of how AI adoption is showing up in practice. They make it possible to see whether AI enablement is applied consistently, repeatably, and at scale across teams.
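
As a rough illustration of the difference between counting signals and rating "sometimes" or "often", here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `WorkItem` fields, the team names, and the `evidence_rate` function are hypothetical and are not part of any specific maturity model or tool.

```python
from dataclasses import dataclass


@dataclass
class WorkItem:
    """Hypothetical record of one ticket, task, or deliverable."""
    team: str
    has_ai_disclosure: bool   # an AI disclosure appears in the work artifact
    has_ai_reference: bool    # AI is referenced in the ticket or task
    uses_shared_prompt: bool  # the item links to a shared prompt or pattern


def evidence_rate(items, team):
    """Return the share of a team's work items with at least one observable AI signal."""
    team_items = [item for item in items if item.team == team]
    if not team_items:
        return 0.0  # no work items recorded, so there is no evidence to count
    with_signal = [
        item for item in team_items
        if item.has_ai_disclosure or item.has_ai_reference or item.uses_shared_prompt
    ]
    return len(with_signal) / len(team_items)


# Made-up sample data, for illustration only.
items = [
    WorkItem("payments", True, False, False),
    WorkItem("payments", False, False, False),
    WorkItem("payments", False, True, True),
    WorkItem("search", False, False, False),
]

for team in ("payments", "search"):
    print(team, round(evidence_rate(items, team), 2))
```

Because the rate is computed from what is recorded in the work itself, two teams can compare numbers without first agreeing on what "sometimes" means.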

Without this level of evidence, maturity becomes subjective and difficult to trust.

With it, organizations can move beyond assumptions and clearly understand how AI adoption is taking shape and where to focus next as part of their broader AI readiness and AI enablement efforts.

Are Outcomes Embedded in the AI Maturity Model?

AI adoption is not the goal. Impact is!

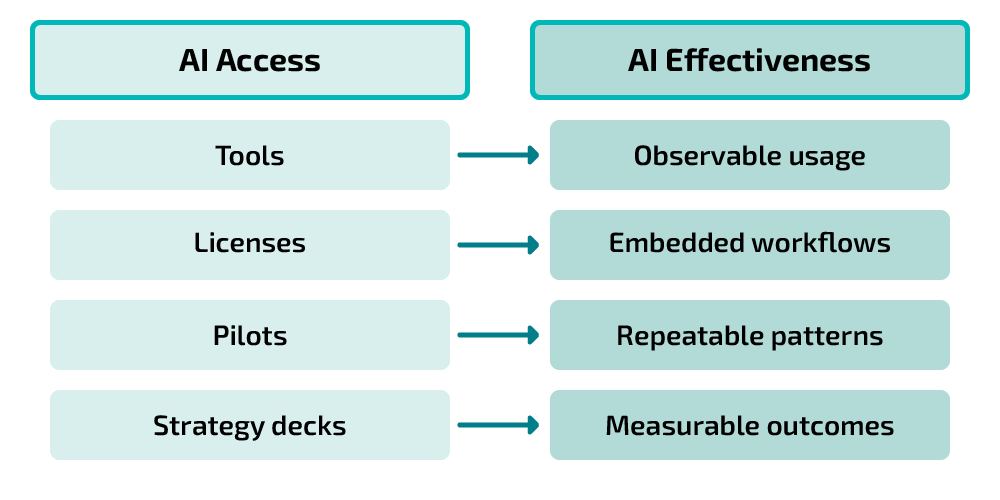

One of the biggest risks organizations face is mistaking increased AI usage for real progress. More tools, more prompts, and more experimentation can create the appearance of advancement without actually improving results.

A strong AI maturity model and any effective AI assessment do not treat outcomes as a separate layer. They embed outcomes directly into the model.

This means maturity is not just defined by how AI is used, but by whether it is driving measurable improvements, such as:

- Faster delivery and reduced cycle times

- Improved quality and fewer defects

- Less rework and more efficient workflows

- Increased productivity across teams

By embedding these outcomes into the model, organizations create clarity around what “good” actually looks like.

It also changes how teams approach improvement.

When teams know they are being measured not just on adoption, but on measurable impact, they become more intentional in how they apply AI. The focus shifts from experimenting with tools to improving results.

This is where AI enablement becomes effective.

Instead of asking, “Are we using AI?”, teams begin asking:

Is this improving how we deliver?

Is this making us more productive?

That shift drives better decisions, more focused improvements, and ultimately stronger outcomes.

An AI maturity model that embeds outcomes enables measurement not just of progress but of effectiveness, providing a clearer view of how AI adoption contributes to overall AI readiness and business impact.

Does the Model Provide a Clear Path Forward and Drive Action?

A maturity model should do more than describe the current state. It should make the path to improvement clear and actionable.

This means showing how AI adoption evolves in ways that reflect how organizations actually work. The early stages may involve inconsistent use and individual experimentation. Over time, this progresses to shared approaches, more consistent application, embedding AI into workflows, and driving outcomes that are reflected in performance metrics.

Each stage should represent a meaningful shift, making it easier for teams to understand what to focus on next.

Just as importantly, the model should make it clear:

- Where gaps exist

- What capabilities need to improve

- Which areas will drive the greatest impact

- How to go about improving them

That last point is critical.

Without guidance on how to improve, teams know where they stand, but not what to do next. A strong AI maturity model provides direction, helping teams translate insights into specific actions that improve how AI is applied in their work.

It should not leave teams asking, “Now what?”

It should make the next step obvious.

When done well, the maturity model becomes more than just a way to assess AI maturity. It becomes a tool for guiding improvement and accelerating AI enablement across the organization.

Where to Start Turning Insight Into Action

For leaders looking to move forward, the goal isn’t to do everything at once. It’s to create clarity and focus.

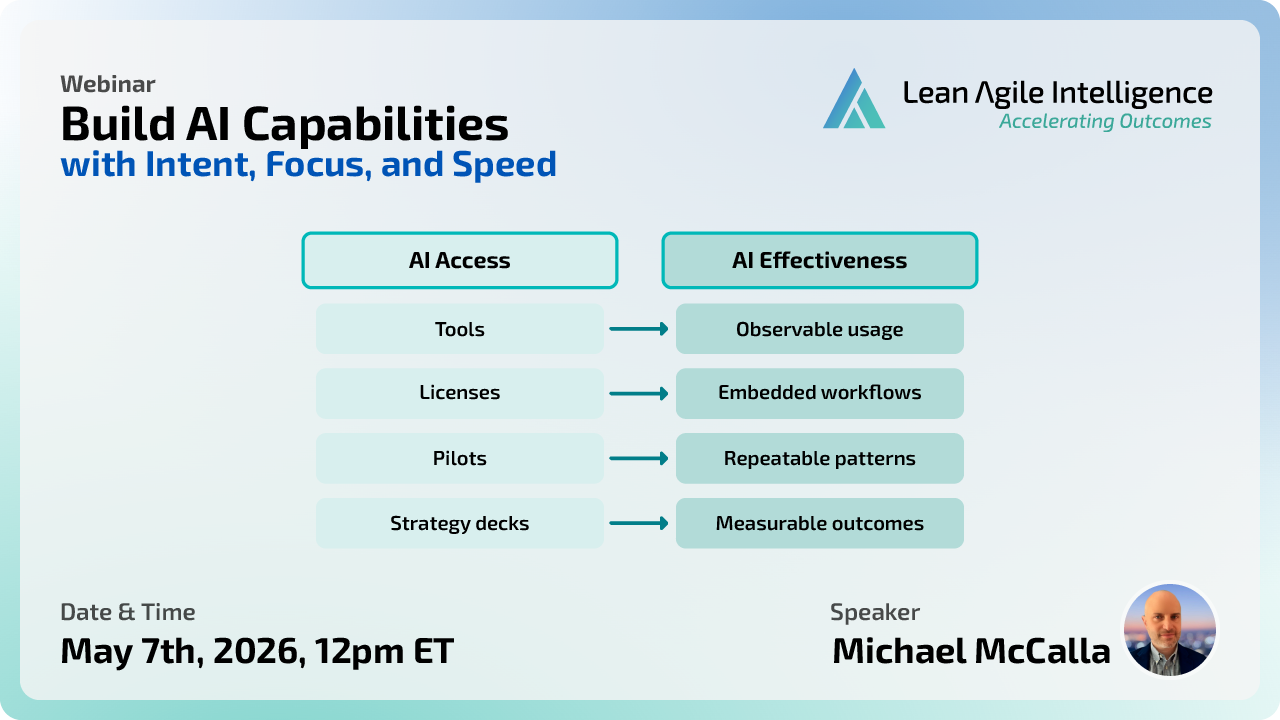

A simple place to start is by joining our upcoming free webinar:

Build AI Capabilities with Intent, Focus, and Speed

🗓 May 7th at 12 PM ET

We’ll walk through how leading organizations are turning AI from experimentation into real, measurable productivity.

If you’re not available on the day, or you’re ready to go deeper, we’ve also been working on something to help with exactly this challenge.

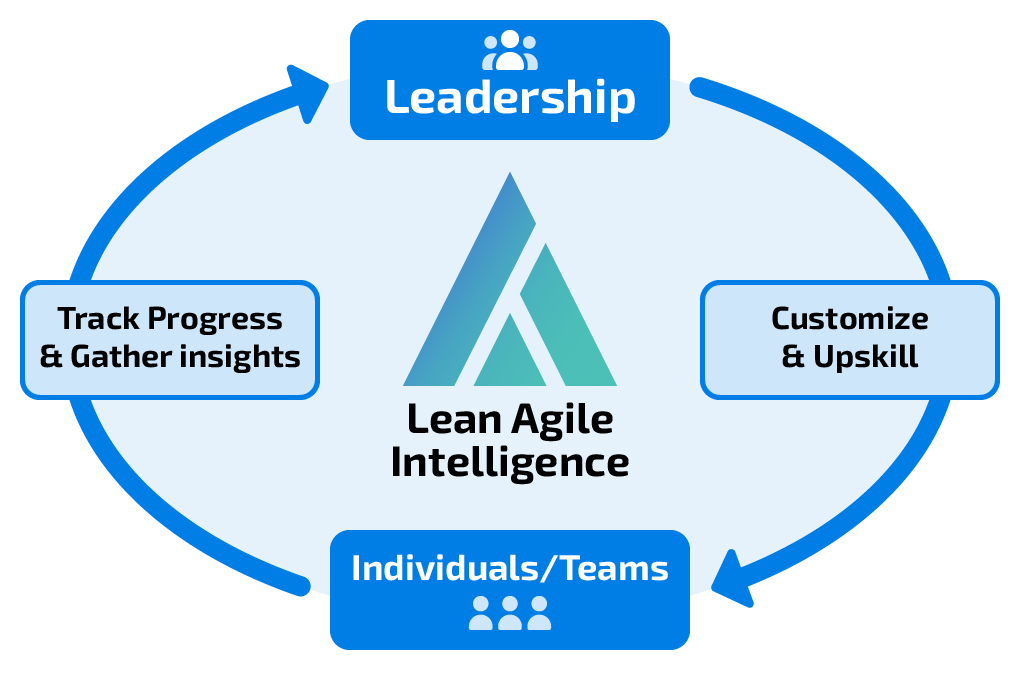

Our AI Assessments Beta Program is designed to help organizations understand:

- where they stand today

- where gaps exist

- and where to focus next

Because ultimately, success with AI isn’t about knowing more. It’s about using it effectively to drive meaningful outcomes.

👉 Explore the AI Assessments Beta Program

Final Thought

AI is evolving quickly, and organizations are under pressure to turn experimentation into real results.

In that environment, clarity becomes a competitive advantage.

A strong AI maturity model provides that clarity by focusing on how AI adoption is showing up in real work, grounding assessment in observable evidence, embedding outcomes into the model, and guiding teams toward meaningful improvement.

Because ultimately, the goal is not to be mature in AI.

The goal is to get better results from how AI is used every day.

Check out our AI Assessments Beta Program to gain insight into how effective your AI adoption is in day-to-day work, and to see where it is driving productivity gains and where it is being limited by capability gaps, unclear expectations, and governance risks.